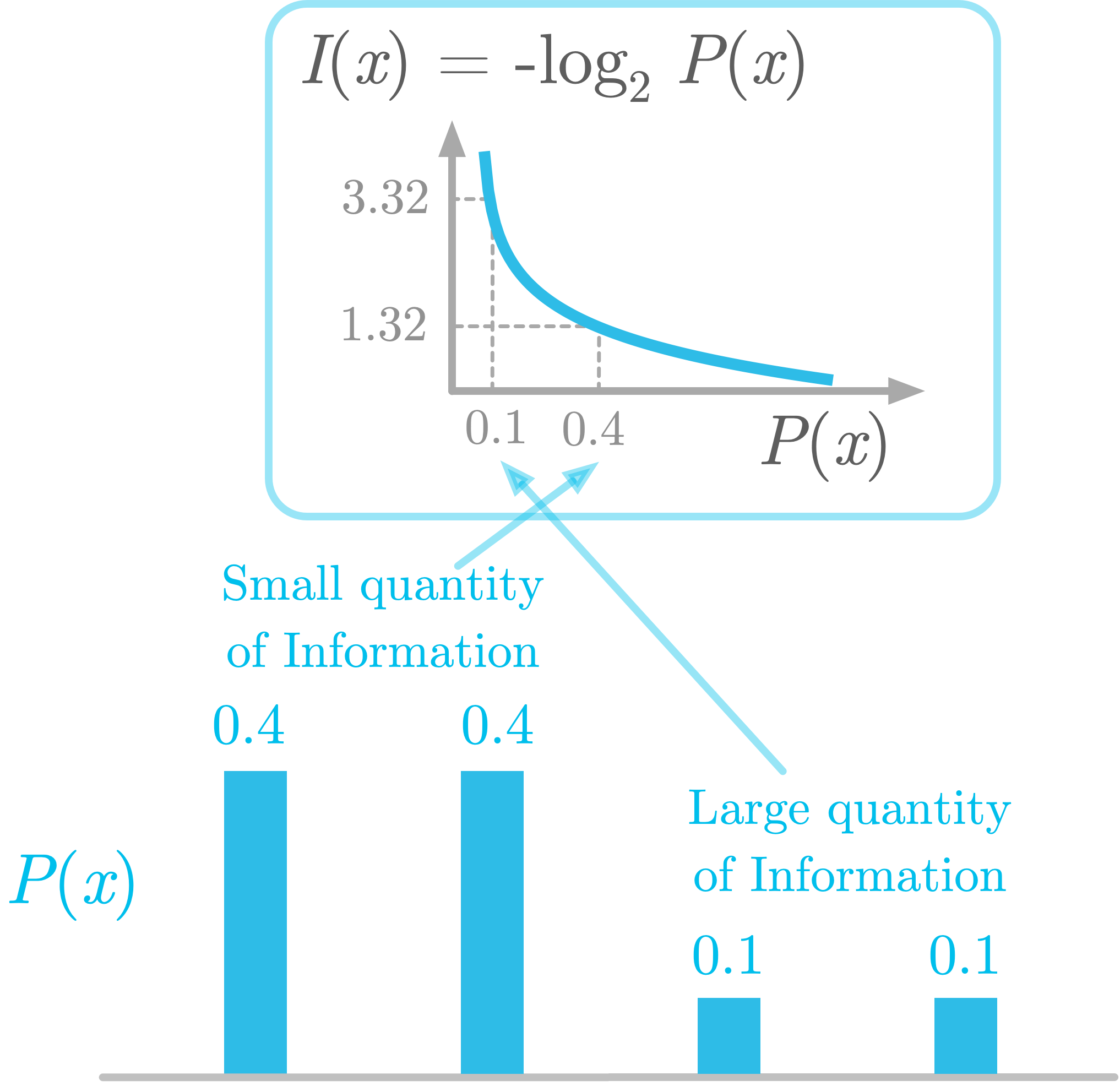

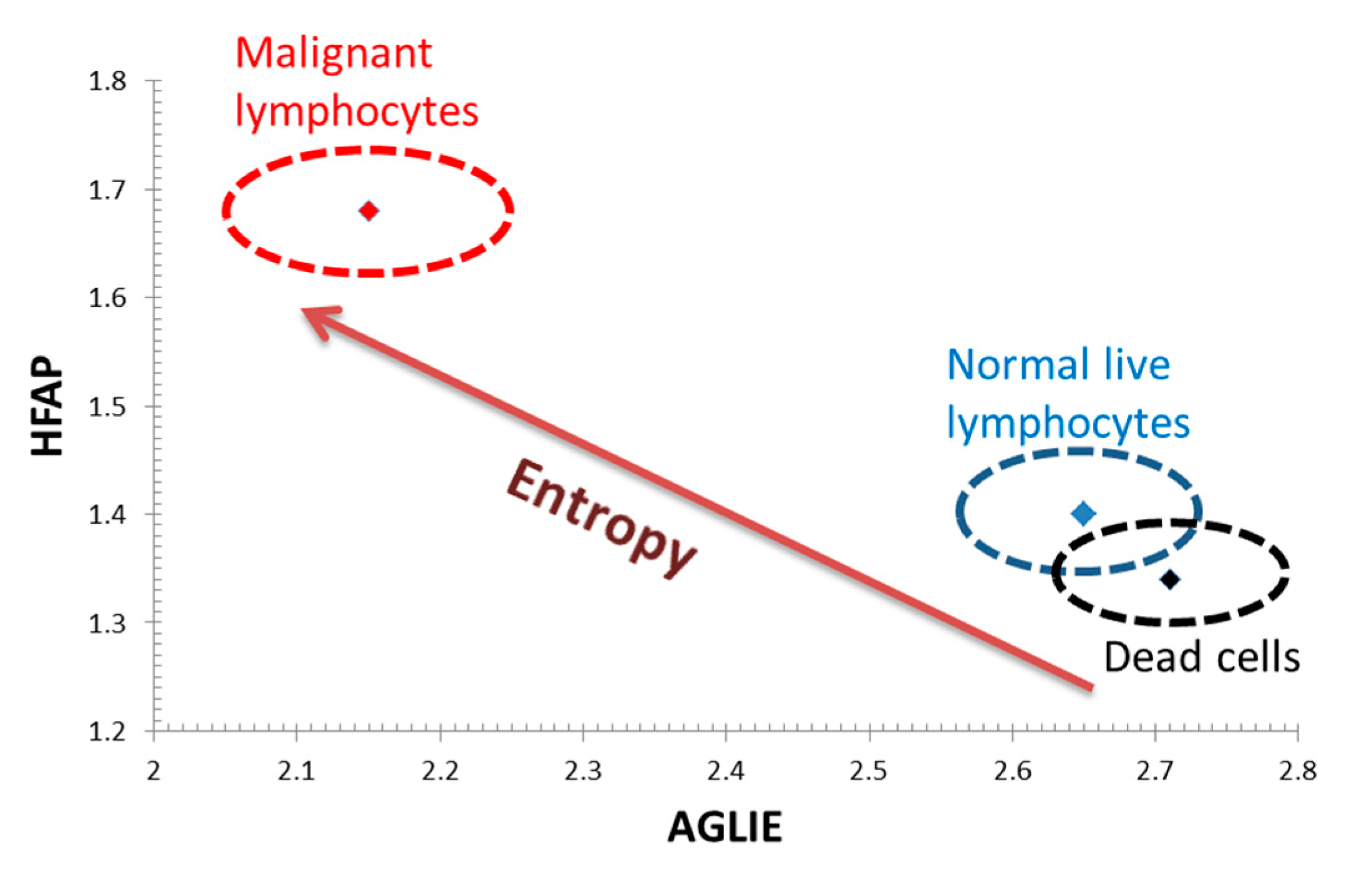

The concept of information entropy was introduced by Claude Shannon in his 1948 paper, "A Mathematical Theory of Communication". Shannon defines entropy in terms of a discrete random event x, with possible states (or outcomes) 1, ..., n, as:

$$H(x) = \sum_{i=1}^{n} p(i) \log_2 \left( \frac{1}{p(i)} \right) = -\sum_{i=1}^{n} p(i) \log_2 p(i)$$

Here p(i) is the probability of outcome i, and the base-2 logarithm means the entropy is measured in bits.

In statistical mechanics, the literature includes references to von Neumann as having derived the same form of entropy in 1927, which is why von Neumann favoured the use of the existing term 'entropy'. (Note: Shannon/Weaver make reference to Tolman (1938), who in turn credits Pauli (1933) with the definition of entropy that is used by Shannon.)

Over the last few years, entropy has become an adequate trade-off measure in image, video, and signal processing.
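As a minimal sketch of the formula for H(x), the following Python function computes the entropy in bits of a discrete distribution. The function name `shannon_entropy` and the choice to pass the probabilities as a plain list are assumptions made for illustration; they do not come from the original text.

```python
import math


def shannon_entropy(probs):
    """Return the entropy, in bits, of a discrete probability distribution.

    `probs` is a sequence of probabilities p(1), ..., p(n) that sum to 1.
    (Illustrative helper; the name and interface are assumptions.)
    """
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Outcomes with p == 0 are skipped: p * log2(p) tends to 0 as p -> 0.
    return -sum(p * math.log2(p) for p in probs if p > 0)


# A fair coin carries one bit per toss; four equally likely outcomes carry two.
fair_coin = shannon_entropy([0.5, 0.5])
four_way = shannon_entropy([0.25, 0.25, 0.25, 0.25])
print(fair_coin, four_way)
```

A biased distribution such as `[0.9, 0.1]` gives a value below one bit, reflecting that its outcome is easier to predict than a fair coin toss.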

The concept of entropy in information theory describes how much randomness (or, alternatively, 'uncertainty') there is in a signal or random event. An alternative way to look at this is to talk about how much information is carried by the signal.

For example, consider some English text, encoded as a string of letters, spaces, and punctuation (so our signal is a string of characters). Since the frequency of some letters is not very high (e.g. 'z'), while other letters are very common (e.g. 'e'), the string of characters is not really as random as it might be. On the other hand, we cannot predict what the next character will be, so it is, to some degree, 'random'. Entropy is defined as lack of order and predictability, which seems like an apt description of the difference between the two scenarios. Entropy is a measure of this randomness, suggested by Shannon in his 1948 paper "A Mathematical Theory of Communication".

Shannon offers a definition of entropy which satisfies the assumptions that:

- The measure should be continuous, i.e. changing the value of one of the probabilities by a very small amount should only change the entropy by a small amount.
- If all the outcomes (letters in the example above) are equally likely, then increasing the number of letters should always increase the entropy.
- We should be able to make the choice (in our example, of a letter) in two steps, in which case the entropy of the final result should be a weighted sum of the entropies of the two steps.

Using the concept of information entropy to study genome mutations has been briefly demonstrated in the previous section, for a small genome subset of 34 characters. The main objective is to implement this technique for studying full size genomes. The method proposed here involves computing a so-called Information Entropy (IE) Spectrum.
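To make the character-string view of entropy concrete, here is a rough Python sketch that estimates entropy from the character frequencies of a string, and then applies the same estimate to successive windows of a longer sequence. The windowed version is only an assumption about what an entropy profile along a sequence could look like; the text does not define how the IE Spectrum is computed, and the names `char_entropy` and `windowed_entropy`, as well as the `window` and `step` parameters, are hypothetical.

```python
import math
from collections import Counter


def char_entropy(text):
    """Empirical entropy, in bits per character, of the characters in `text`."""
    if not text:
        return 0.0
    total = len(text)
    counts = Counter(text)
    # Each distinct character contributes p * log2(1 / p), with p = count / total.
    return sum((c / total) * math.log2(total / c) for c in counts.values())


def windowed_entropy(seq, window, step=1):
    """Entropy of each length-`window` slice of `seq`, moving `step` at a time.

    Hypothetical helper: one possible way to profile entropy along a sequence,
    not the method described in the text.
    """
    if window <= 0 or step <= 0:
        raise ValueError("window and step must be positive")
    return [
        char_entropy(seq[start:start + window])
        for start in range(0, len(seq) - window + 1, step)
    ]


# A repetitive string is fully predictable; four equally frequent letters are not.
print(char_entropy("AAAA"))   # no uncertainty
print(char_entropy("ACGT"))   # maximal for a 4-letter alphabet
print(windowed_entropy("AAAACGT", window=4))
```

In this sketch a change to a single character alters the frequencies in every window that covers it, so the corresponding entries of the profile shift while the rest stay the same.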
